Introduction to 3D Rendering

How do we create images of virtual worlds?

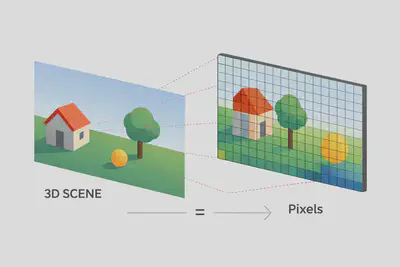

What is Rendering?

Rendering answers a simple question:

For each pixel on the screen, what color should it be?

- An image = a grid of pixels

- Rendering = computing the color of each pixel

- This depends on:

  - Geometry of the scene

  - Materials of objects

  - Light sources

  - Camera position
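As a minimal sketch of this per-pixel view, the loop below fills a grid by calling a shading function once per pixel. The names `render` and `gradient` are hypothetical, and `gradient` is only a placeholder for the real computation that would use geometry, materials, lights, and the camera.

```python
def render(width, height, shade):
    """Return a height x width grid of colors, calling shade(x, y) once per pixel."""
    return [[shade(x, y) for x in range(width)] for y in range(height)]


def gradient(x, y):
    # Placeholder shading function (assumption): a real renderer would
    # compute this color from the scene instead of the pixel position.
    return (x * 64, y * 64, 0)


image = render(4, 2, gradient)
```

Everything else in rendering can be read as a way of making `shade` both accurate and fast.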

Two ways to think about rendering

1) Image-first (modern view)

For each pixel:

- What light reaches the camera?

- How does the surface reflect it?

- How do we approximate this efficiently?

2) Geometry-first (historical view)

Transform 3D objects → project → shade → display.

In this course, we start from the image-first view.

Part I — Cameras and Projection

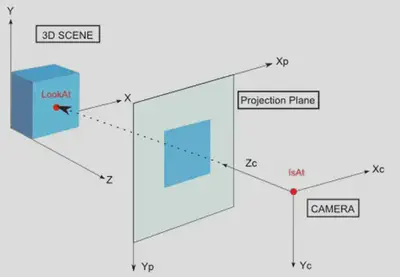

Virtual Camera

A virtual camera is defined by:

- Position (isAt)

- Viewing direction (lookAt)

- Up vector

- Field of view (FOV)
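These parameters are usually turned into three perpendicular axes for the camera. The sketch below assumes that `lookAt` is a target point (not a direction vector) and that the coordinate system is right-handed; the helper names are hypothetical.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def camera_basis(is_at, look_at, up):
    """Return the (right, up, forward) unit axes of the camera."""
    forward = normalize(sub(look_at, is_at))   # viewing direction
    right = normalize(cross(forward, up))      # perpendicular to forward and up
    true_up = cross(right, forward)            # up vector made exactly perpendicular
    return right, true_up, forward


right, up, forward = camera_basis((0, 0, 5), (0, 0, 0), (0, 1, 0))
```

The supplied up vector only needs to be roughly vertical; the second cross product makes it exactly perpendicular to the viewing direction.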

Projection: 3D → 2D

We must map 3D points to 2D pixels.

Two main types of projection:

- Parallel (orthographic) projection

  - No size change with distance

  - Useful for technical drawings, CAD, UI

- Perspective projection

  - Distant objects appear smaller

  - Matches human vision and photography
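The difference between the two projections can be sketched in a few lines. This assumes a camera at the origin looking along +z, points given in camera coordinates with z > 0, and a hypothetical `focal_length` parameter that sets the distance to the image plane.

```python
def project_orthographic(point):
    """Parallel projection: simply drop the depth coordinate."""
    x, y, _z = point
    return (x, y)


def project_perspective(point, focal_length=1.0):
    """Perspective projection: divide by depth, so distant points shrink."""
    x, y, z = point
    return (focal_length * x / z, focal_length * y / z)


near_point = (1.0, 1.0, 2.0)
far_point = (1.0, 1.0, 4.0)
```

Under the orthographic projection both points land at the same 2D position; under the perspective projection the farther point lands closer to the image center.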

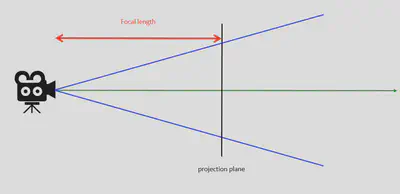

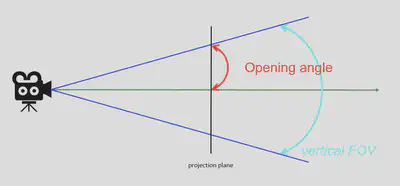

Perspective and Field of View

Key parameters:

- Focal length (camera metaphor)

- Field of View (FOV):

  - Horizontal FOV

  - Vertical FOV

Smaller focal length → wider FOV

Larger focal length → narrower FOV
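The relation between focal length and FOV follows from the pinhole camera model: the FOV is twice the angle whose tangent is half the sensor size divided by the focal length. The sketch below assumes a 36 mm wide sensor as the default, which is an illustrative choice and not taken from the slides.

```python
import math


def fov_degrees(focal_length_mm, sensor_size_mm=36.0):
    """Field of view (in degrees) of a pinhole camera along one sensor dimension."""
    return math.degrees(2.0 * math.atan(sensor_size_mm / (2.0 * focal_length_mm)))


wide = fov_degrees(18.0)     # short focal length
narrow = fov_degrees(50.0)   # longer focal length
```

Passing the sensor width gives the horizontal FOV, and passing the sensor height gives the vertical FOV.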

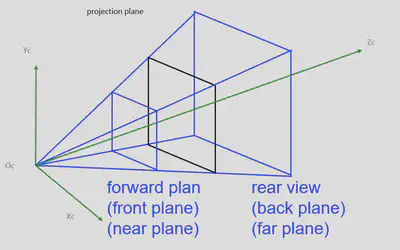

The View Frustum (View Pyramid)

The camera sees only what lies inside a 3D volume:

- Near plane

- Far plane

- Left, right, top, bottom planes

This defines what can potentially appear on screen.
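A point-in-frustum test makes the six planes concrete. This is a sketch for a symmetric frustum only, assuming camera coordinates with the camera looking along +z; the function name and parameters are hypothetical.

```python
import math


def in_frustum(point, fov_y_deg, aspect, near, far):
    """Return True if a camera-space point lies inside a symmetric view frustum."""
    x, y, z = point
    if not (near <= z <= far):           # near and far planes
        return False
    half_height = z * math.tan(math.radians(fov_y_deg) / 2.0)
    half_width = half_height * aspect
    # left/right and bottom/top planes
    return abs(x) <= half_width and abs(y) <= half_height
```

For example, with a 90 degree vertical FOV and a square image, the point `(4, 0, 5)` is inside the frustum while `(10, 0, 5)` falls outside the right plane.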

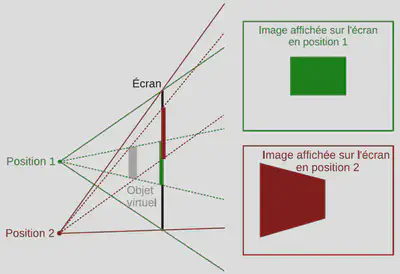

Head Tracking and Dynamic Frustum

In VR / AR:

- The frustum deforms with head position

- It is defined by:

  - The four screen corners

  - The viewer’s eye position

This enables correct perspective for each eye.
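A sketch of how the frustum follows the eye, under simplifying assumptions that are not from the slides: the screen is an axis-aligned rectangle in the plane z = 0, and the eye sits in front of it at z > 0. The function returns the left, right, bottom, and top extents of the frustum on the near plane, which is the usual input for building an off-axis projection matrix.

```python
def off_axis_frustum(eye, screen_left, screen_right, screen_bottom, screen_top, near):
    """Near-plane extents (left, right, bottom, top) for an eye in front of a fixed screen."""
    ex, ey, ez = eye
    scale = near / ez   # similar triangles: shrink screen extents onto the near plane
    return ((screen_left - ex) * scale,
            (screen_right - ex) * scale,
            (screen_bottom - ey) * scale,
            (screen_top - ey) * scale)


centered = off_axis_frustum((0.0, 0.0, 2.0), -1.0, 1.0, -1.0, 1.0, 0.1)
shifted = off_axis_frustum((0.5, 0.0, 2.0), -1.0, 1.0, -1.0, 1.0, 0.1)
```

With the eye centered the frustum is symmetric; once the eye moves to the right, the left extent grows and the right extent shrinks, so the frustum becomes asymmetric.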

Part II — From 3D to Pixels: Rasterization (Modern GPU View)

Two Families of Rendering

Rasterization (real-time, GPUs)

- Used in games, VR, AR

- Very fast

- Approximates light behavior

Ray Tracing / Path Tracing (physically based)

- Traces rays of light through the scene

- More realistic reflections, shadows, global illumination

- Historically slow → now accelerated with modern GPUs

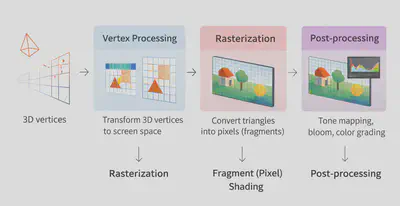

The Modern Graphics Pipeline (Simplified)

Vertex Processing

- Transform 3D vertices to screen space

Rasterization

- Convert triangles into pixels (fragments)

Fragment (Pixel) Shading

- Compute color using materials and lights

Post-processing

- Tone mapping, bloom, color grading

Visibility: What do we actually see?

Before shading, the GPU determines visibility:

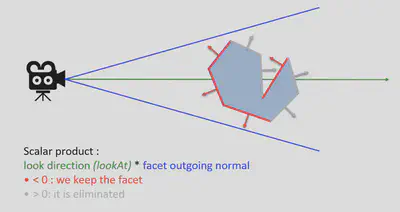

Back-face Culling

- Triangles facing away from the camera are discarded

- Saves computation
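One common way to express the test is to compare the triangle normal with the direction from the eye to the triangle. The sketch below assumes counter-clockwise vertex order for front faces, which is a convention choice; the helper names are hypothetical.

```python
def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def is_back_facing(a, b, c, eye):
    """True if triangle (a, b, c), counter-clockwise when seen from the front, faces away from eye."""
    normal = cross(sub(b, a), sub(c, a))
    view = sub(a, eye)               # direction from the eye to the triangle
    return dot(normal, view) >= 0    # normal points away from the eye


triangle = ((0, 0, 0), (1, 0, 0), (0, 1, 0))   # normal points along +z
```

Seen from `(0, 0, 5)` this triangle is kept; seen from `(0, 0, -5)` it is culled.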

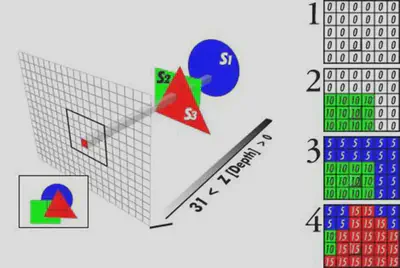

Depth Buffer (Z-Buffer) — in practice

For each pixel, we store the depth of the closest surface.

If a new fragment is farther away → it is discarded; otherwise it overwrites the stored color and depth.

This solves hidden surface removal efficiently.
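The depth test can be sketched on the CPU as follows. Fragments are given as hypothetical `(x, y, depth, color)` tuples, and smaller depth means closer to the camera; a GPU performs the same comparison in hardware.

```python
def resolve_visibility(width, height, fragments):
    """Keep, for each pixel, the color of the closest fragment."""
    depth_buffer = [[float("inf")] * width for _ in range(height)]
    color_buffer = [[None] * width for _ in range(height)]
    for x, y, depth, color in fragments:
        if depth < depth_buffer[y][x]:      # closer than what is stored
            depth_buffer[y][x] = depth
            color_buffer[y][x] = color
        # otherwise the fragment is hidden and discarded
    return color_buffer, depth_buffer


fragments = [(0, 0, 5.0, "red"), (0, 0, 2.0, "blue"), (0, 0, 9.0, "green")]
colors, depths = resolve_visibility(2, 1, fragments)
```

The result does not depend on the order in which opaque fragments arrive, which is why no sorting of triangles is needed.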

Practical issues with depth

Common problems in real engines:

- Z-fighting: precision issues when surfaces are very close

- Overdraw: many fragments drawn but not visible

- Transparency sorting: complex with alpha blending

(These are key modern graphics concerns.)

Part III — Materials and Lighting (Modern Approach)

How light creates color

At each point on a surface, light interacts with the material:

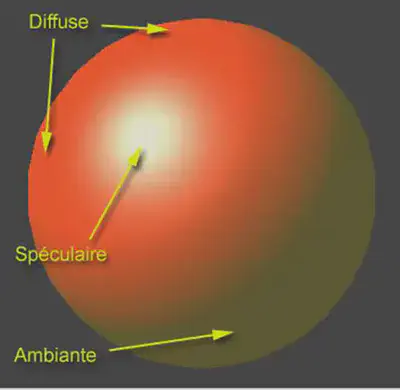

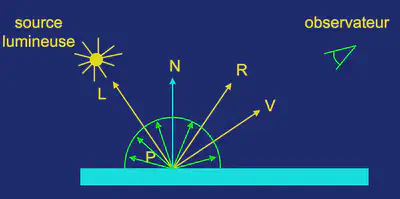

Three intuitive components (historical model):

- Ambient

- Diffuse

- Specular

Ambient lighting

Each object is displayed with a constant, intrinsic intensity:

- No external light source is modeled

- Not physically realistic: objects behave as if they emitted their own light and did not reflect any

Diffuse lighting

Part of the incident light enters the object and exits with the same intensity in all directions, so the result does not depend on the viewing direction.

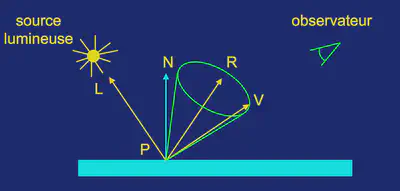

Specular lighting

Incident light that does not penetrate the object is reflected at its surface. The reflected intensity depends on the viewing direction.
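The three components are classically combined in the Phong reflection model. The sketch below computes a single scalar intensity; the coefficients `ka`, `kd`, `ks` and the `shininess` exponent are illustrative values chosen here, not values from the slides.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def phong_intensity(normal, to_light, to_viewer, ka=0.1, kd=0.7, ks=0.2, shininess=32):
    """Ambient + diffuse + specular intensity at one surface point."""
    n, l, v = normalize(normal), normalize(to_light), normalize(to_viewer)
    n_dot_l = dot(n, l)
    if n_dot_l <= 0.0:
        return ka                        # light is behind the surface: ambient only
    diffuse = kd * n_dot_l               # independent of the viewer
    reflected = tuple(2.0 * n_dot_l * nc - lc for nc, lc in zip(n, l))
    specular = ks * max(dot(reflected, v), 0.0) ** shininess   # depends on the viewer
    return ka + diffuse + specular
```

Moving the viewer changes only the specular term, which is what makes highlights slide across a surface as the camera moves.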

From Phong to PBR (Modern Materials)

Modern engines use Physically Based Rendering (PBR) instead of classic Phong.

A PBR material is typically defined by:

- Albedo (base color)

- Roughness (matte vs shiny)

- Metallic (metal vs non-metal)

- Normal map (fake surface detail)

This ensures:

- Realistic lighting

- Consistency across different light conditions

Why PBR is better

With Phong:

- Energy is not conserved (objects can appear “too bright”)

With PBR:

- Energy is conserved

- Materials look correct under any lighting

Surface Smoothing

To avoid “faceted” appearance:

Gouraud shading

- Interpolate colors across triangles

Phong shading

- Interpolate normals, compute lighting per pixel
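The difference between the two methods shows up between vertices. The sketch below compares them along one edge using diffuse lighting only; the function names are hypothetical and the setup is reduced to two vertex normals and an interpolation parameter `t`.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def lambert(normal, to_light):
    """Diffuse intensity for one normal and one light direction."""
    return max(dot(normalize(normal), normalize(to_light)), 0.0)


def gouraud(n0, n1, to_light, t):
    """Light the two vertices, then interpolate the resulting intensities."""
    return (1.0 - t) * lambert(n0, to_light) + t * lambert(n1, to_light)


def phong(n0, n1, to_light, t):
    """Interpolate the normals, then light the interpolated normal."""
    n = tuple((1.0 - t) * a + t * b for a, b in zip(n0, n1))
    return lambert(n, to_light)


g = gouraud((1, 0, 0), (0, 1, 0), (1, 1, 0), 0.5)
p = phong((1, 0, 0), (0, 1, 0), (1, 1, 0), 0.5)
```

Here the light points exactly between the two vertex normals: Phong shading reaches full intensity at the midpoint, while Gouraud shading only averages the two dimmer vertex values and misses the peak.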

What is a Material?

In practice, a material includes:

- Base color

- Roughness

- Metallic

- Normal map

- Transparency (alpha)

- Emissive color (self-illumination)

Part IV — Textures (Essential for Realism)

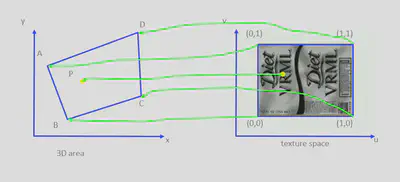

What is a Texture?

A texture is a 2D image mapped onto a 3D surface.

Each vertex gets UV coordinates (u, v) in [0,1].

For each pixel, the UV coordinates are interpolated across the triangle and used to look up the texture color.
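A texture lookup with bilinear filtering can be sketched as follows. For simplicity this assumes a grayscale texture stored as a list of rows and maps u, v in [0, 1] directly onto the first and last texel; real APIs differ in their texel-center and wrapping conventions.

```python
def sample_bilinear(texture, u, v):
    """Sample a grayscale texture at (u, v) in [0, 1] by blending the 4 nearest texels."""
    height, width = len(texture), len(texture[0])
    x, y = u * (width - 1), v * (height - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0                      # fractional position between texels
    top = texture[y0][x0] * (1 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1 - fx) + texture[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


checker = [[0.0, 100.0],
           [100.0, 200.0]]
center = sample_bilinear(checker, 0.5, 0.5)
```

Sampling exactly at a corner returns that texel; sampling in between returns a weighted blend of its neighbors.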

Beyond Color: Modern Texture Maps

Today, textures are used for much more than color:

- Normal maps → fake fine detail

- Roughness maps → control shininess

- Metallic maps → define metal areas

- Ambient occlusion maps → fake soft shadows

Part V — Modern Rendering Techniques

Shadows

Most real-time engines use shadow maps:

- Render the scene from the light’s point of view

- Store depth information

- Use it to determine shadowed areas
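The three steps can be sketched with a tiny depth map. Occluders and shaded points are given directly in the light's image space as hypothetical `(x, y, depth)` values; the `bias` parameter is a common practical addition (an assumption here) that prevents a surface from shadowing itself because of limited precision.

```python
def build_shadow_map(width, height, occluders):
    """Store, for each texel seen from the light, the depth of the closest surface."""
    shadow_map = [[float("inf")] * width for _ in range(height)]
    for x, y, depth in occluders:
        shadow_map[y][x] = min(shadow_map[y][x], depth)
    return shadow_map


def in_shadow(shadow_map, x, y, depth, bias=0.005):
    """A point is shadowed if something closer to the light covers the same texel."""
    return depth - bias > shadow_map[y][x]


shadow_map = build_shadow_map(2, 1, [(0, 0, 1.0), (0, 0, 3.0)])
```

The surface at depth 1.0 is lit, the surface behind it at depth 3.0 is in shadow, and any point in the empty second texel is lit.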

Global Illumination

Two main approaches:

Baked GI (offline)

- Precomputed lighting

- Very stable, cheap at runtime

Real-time GI

- Dynamic lighting updates

- Used in modern engines (Unreal Lumen, Unity HDRP)

Screen-Space Effects

Computed from the rendered image (often together with the depth buffer) rather than from the 3D scene:

- SSAO (soft ambient shadows)

- Screen-space reflections

- Motion blur

- Bloom

- Tone mapping

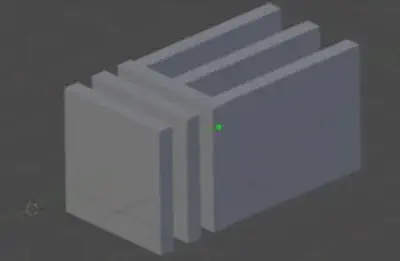

Stereoscopic Rendering (VR)

For VR, we render:

- One image per eye

- Two slightly different cameras

- Two frustums

- Two rasterization passes
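The two cameras are obtained by offsetting the head position along the camera's right axis. The sketch below assumes an interpupillary distance of 0.064 m as a default, which is an illustrative value; the function and parameter names are hypothetical.

```python
def eye_positions(head, right_axis, ipd=0.064):
    """Return (left_eye, right_eye) positions, half the eye distance on each side of the head."""
    half = ipd / 2.0
    left_eye = tuple(h - half * r for h, r in zip(head, right_axis))
    right_eye = tuple(h + half * r for h, r in zip(head, right_axis))
    return left_eye, right_eye


left_eye, right_eye = eye_positions((0.0, 1.7, 0.0), (1.0, 0.0, 0.0))
```

Each eye position then gets its own view and its own frustum, and the scene is rendered once per eye.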

Smart engines share work between both eyes when possible.

Conclusion

Modern rendering combines:

- Fast rasterization for real-time

- Physically based materials (PBR)

- Smart approximations (shadow maps, GI, screen-space effects)

- Increasing use of ray tracing for realism