Perception of Affordances and Embodied Interaction in Augmented Reality (PAEIAR)

Abstract

Augmented Reality (AR) reshapes how humans perceive and act in the world by overlaying digital information onto physical objects. While AR technologies are rapidly evolving, the way these augmentations transform the perception of affordances—the action possibilities offered by objects—remains poorly understood.

This project investigates how visual and spatial cues introduced through AR modify the perception of affordances and how these modifications can influence user behavior and gestures. In hybrid environments where physical and virtual properties coexist, objects may invite new actions, inhibit existing ones, or generate entirely new forms of interaction.

The project is based on the hypothesis that AR does not simply add virtual information to the physical world but creates mixed affordances, emerging from the integration of real and virtual cues. Understanding these hybrid affordances is essential for designing intuitive and perceptually coherent AR interfaces.

To address this challenge, the project combines experimental psychology, human–computer interaction, and augmented reality engineering. It will develop experimental protocols to measure perceptual changes, investigate how different interaction techniques reshape affordance perception, and design visual strategies capable of guiding user gestures in real-world tasks.

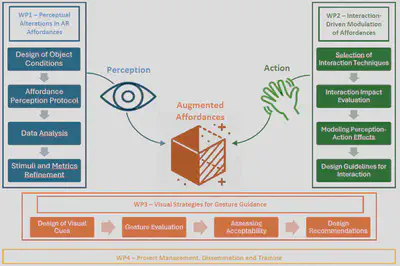

The project is structured around three research axes: characterizing how AR modifies affordance perception, studying how interaction techniques modulate this perception, and designing visual cues that guide user gestures through augmented affordances.

By providing conceptual models, empirical data, and design guidelines, the project aims to advance embodied interaction in AR and contribute to the development of perceptually grounded, intuitive mixed-reality interfaces.

People involved

Project leader

Collaborators

PhD Student

Research structure

The project is organized around three main research work packages.

WP1 — Perceptual Alterations in AR Affordances

This work package investigates how visual modifications introduced by AR influence users’ perception of objects and their associated action possibilities. Experiments will compare perception and interaction with real objects, subtly augmented objects, and strongly modified augmented objects.

WP2 — Interaction‑Driven Modulation of Affordances

This work package studies how different interaction techniques—such as direct manipulation, tangible interaction, or indirect control—reshape the way users perceive affordances in AR environments. The goal is to understand how action itself modulates perception.

WP3 — Visual Strategies for Gesture Guidance

This work package develops and evaluates visual augmentations that guide user gestures by altering perceived affordances. The aim is to design AR cues that subtly steer interaction without explicit instructions.

Together, these work packages address the full perception–action loop in augmented environments: from perceptual interpretation of augmented objects to gesture execution guided by AR cues.

Expected outcomes

The project will produce:

- experimental protocols to measure affordance perception in AR

- datasets and behavioral analyses of perception‑action dynamics in hybrid environments

- taxonomies of visual cues capable of modulating perceived affordances

- design guidelines for perceptually grounded AR interfaces

These outcomes will contribute both to fundamental research in perception and embodied cognition and to the design of practical AR systems.

Impact

In the short term, the project will generate validated experimental methods and empirical data on how AR influences perception and action. Results will be disseminated through publications in leading venues such as IEEE VR, ISMAR, and CHI, as well as through open repositories.

In the medium term, the project will support the design of more intuitive AR interfaces, reducing the cognitive barriers faced by users unfamiliar with immersive technologies.

In the long term, the project will contribute to a shift from control‑centric interfaces toward perception‑guided interaction, where user behavior is shaped by perceptually meaningful visual cues rather than by explicit instructions.

Funding

This project is funded by the French National Research Agency (ANR) under the AAPG 2025 program (JCJC scheme).

Duration: 42 months